AI as Societal Infrastructure

2025-08-01

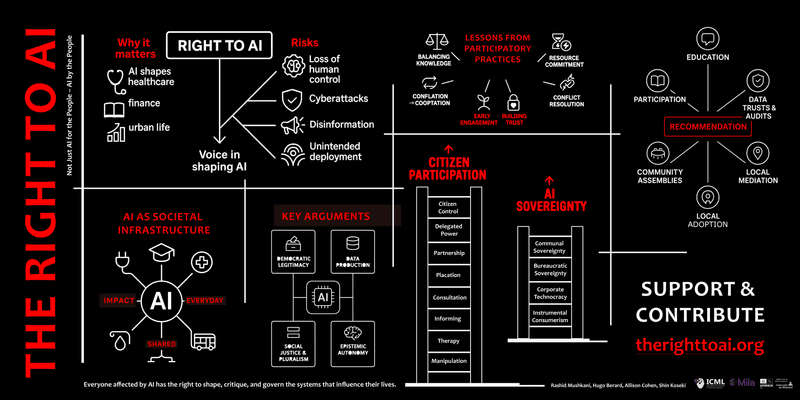

The Right to AI begins from a basic premise: if AI is becoming infrastructure, the public should help govern it (Mushkani et al., 2025).

Why This Claim Matters

The present AI landscape is still dominated by a narrow set of firms and institutions, while the people most affected by automated systems are often invited in late, if at all. That imbalance is not only a democratic problem. It is also a design problem, because systems built without meaningful public influence are more likely to harden bias, miss local realities, and weaken trust.

The paper argues that AI should be understood less as a consumer product and more as societal infrastructure: something closer to transit, housing systems, schools, or public space in the scale of its social consequences. Once AI is seen that way, questions of ownership, access, accountability, and public stewardship move from the margins to the center.

What The Paper Does

The paper sets out a research agenda for treating AI as part of public life rather than as a sealed technical product. It asks how communities can shape AI systems before those systems become entrenched and how participation can change design, data governance, oversight, and deployment decisions in concrete ways.

What The Paper Argues

The paper draws on Lefebvre's Right to the City to argue for a Right to AI: the capacity and entitlement of individuals and communities to shape, critique, and govern the AI infrastructures that affect their lives (Mushkani et al., 2025). It treats data as socially produced, questions market-led and state-centric models that leave the public at a distance, and proposes participatory alternatives such as local councils, data trusts, stakeholder-led oversight, and conflict-resolution mechanisms that recognize plural values rather than assuming one universal public interest.

What matters in that argument is its insistence that participation must do real work. It should not stop at comment boxes or consultation theater. It should shape whether systems are built, how data is governed, who can inspect harms, and what forms of redress are available.

From Consultation To Power

The paper adapts the logic of participation ladders to AI governance. At the bottom are models that treat people as consumers, perhaps informed but largely powerless. Higher up are approaches that invite some transparency or consultation while retaining centralized control. At the top are arrangements closer to shared governance, where communities and experts help decide the goals, risks, and limits of the system together.

That framework is useful because it names a confusion that recurs throughout AI governance: treating visibility as power. Being asked for feedback is not the same as having standing. Reading a policy is not the same as being able to contest it. The Right to AI keeps pressing on that difference.

What Comes Next

I see the next phase in practical terms: better public AI literacy, stronger local participation tools, and governance arrangements that can move from advisory rituals toward durable public authority.

References

Mushkani, R., Berard, H., Cohen, A., & Koseki, S. (2025). Position: The Right to AI. arXiv. https://arxiv.org/abs/2501.17899